Every Upload Is a Decision

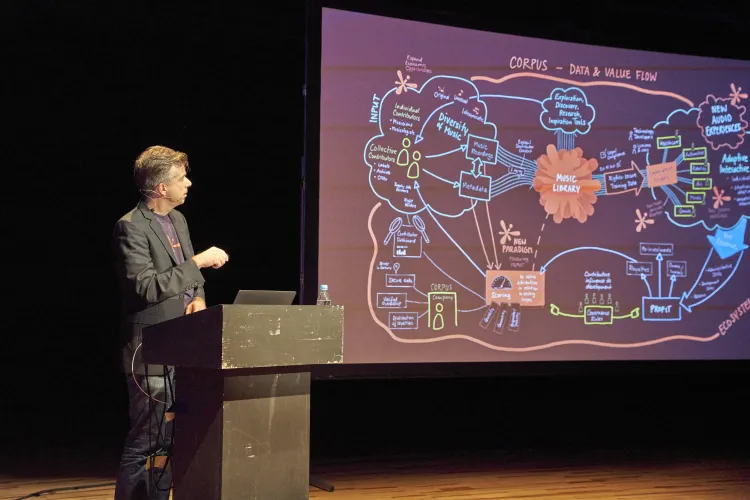

In March 2026, CORPUS will open its contribution platform to a public beta. Musicians will be able to upload original recordings, stems, and additional files such as MIDI to a shared library designed for AI training, and to receive compensation based on how much their work enriches the dataset. The premise sounds simple. This article is about why it isn't.

The deceptive simplicity of the task

The core idea behind the CORPUS contribution app can be stated in one sentence: musicians upload music, the system evaluates it, and their contributions earn them a stake in the revenue their work helps generate. Everything else follows from taking that sentence seriously.

We expected the hard problems to live in the scoring system — the question of how to measure quality, originality, and diversity. That is indeed hard, and we have written about it. But before we could even begin to evaluate contributions, a prior set of questions forced itself into view. Questions about what happens between the moment a file leaves a contributor's computer and the moment it enters the library.

These questions turned out to be more consequential and more revealing than we anticipated.

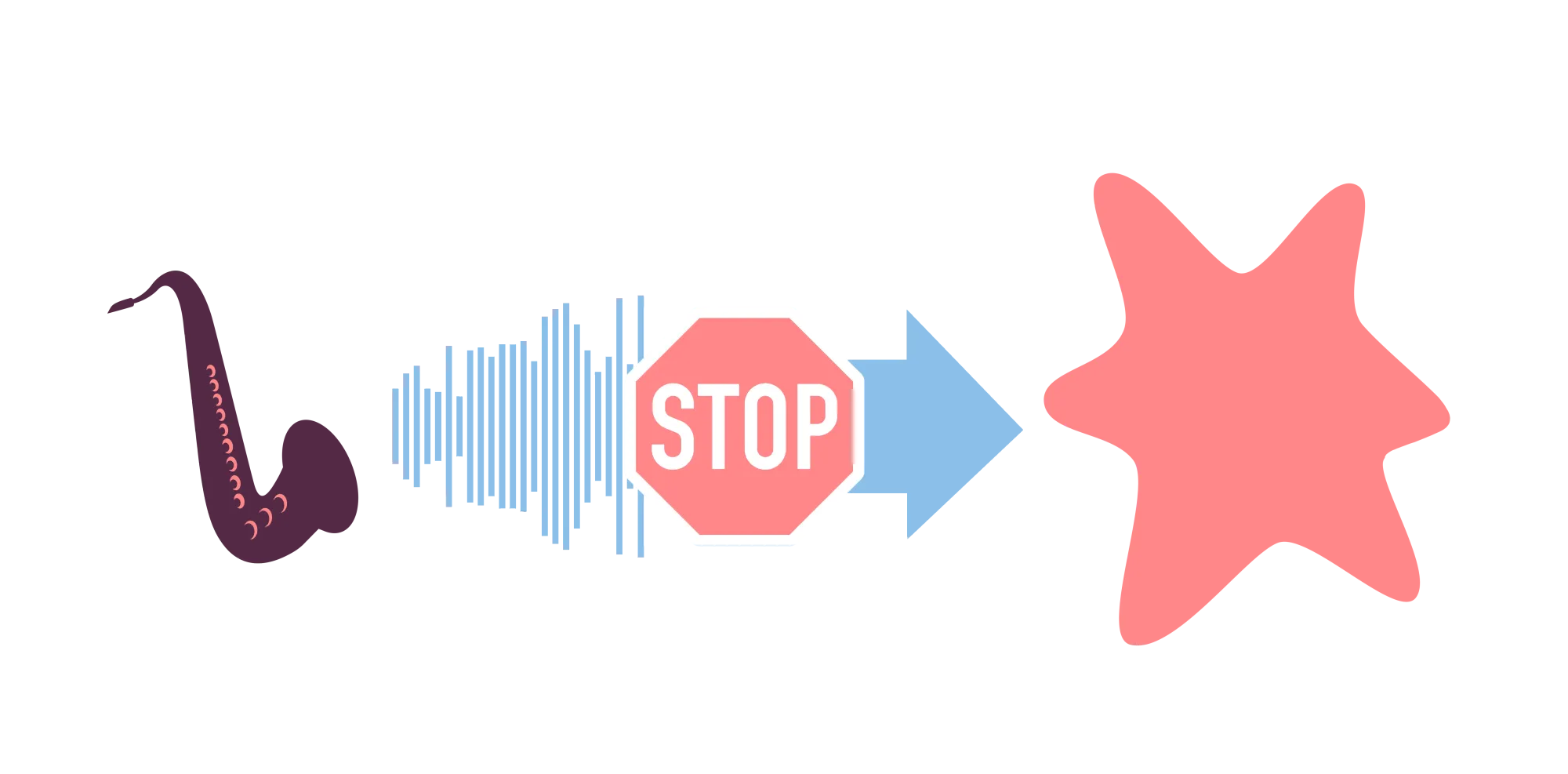

Filtering as a sequence of recognitions

The first question is elementary: is the uploaded file actually music?

This sounds trivial. It is not. People upload field recordings, corrupted audio, test files, spoken word, sound effects. A training corpus contaminated with non-musical material degrades model quality and wastes processing resources. So we built a classifier that distinguishes music from non-music — including technically broken files — and it works well.

Except for noise music, a genre where distortion, feedback, and sonic extremity are not defects but the point. Several people on our team care deeply about this music. The classifier flags it: that is correct behavior from a signal-processing perspective and wrong behavior from a musical one.

This is not an edge case. It is the first instance of a pattern that repeats at every layer of the system: automated filters encode assumptions about what music is, and those assumptions will always be contested. The response cannot be to make the filter smarter. The response must be structural. In this case: a contributor whose work is flagged can appeal. If the appeal is legitimate — a noise artist, an experimental composer — we disable that specific filter for their account. The system adapts to the contributor, not the other way around.
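The appeal mechanism can be pictured as a per-account exemption list consulted before each filter runs. The following is a minimal sketch, not the CORPUS implementation: the names `Account`, `grant_appeal`, `run_filters`, and the two toy filters are hypothetical, and the real classifiers are replaced by simple threshold checks.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set

# Hypothetical filter signature: returns True when the upload should be flagged.
Filter = Callable[[dict], bool]


@dataclass
class Account:
    account_id: str
    # Names of filters switched off for this account after a granted appeal.
    disabled_filters: Set[str] = field(default_factory=set)


def grant_appeal(account: Account, filter_name: str) -> None:
    """Record a reviewer's decision to disable one filter for one account."""
    account.disabled_filters.add(filter_name)


def run_filters(account: Account, upload: dict, filters: Dict[str, Filter]) -> List[str]:
    """Return the names of all active filters that flag this upload."""
    return [
        name
        for name, check in filters.items()
        if name not in account.disabled_filters and check(upload)
    ]


# Toy stand-ins for real classifiers (assumptions for illustration only).
FILTERS: Dict[str, Filter] = {
    "non_music": lambda u: u["distortion"] > 0.8,
    "too_short": lambda u: u["seconds"] < 5,
}

noise_artist = Account("artist-1")
other_account = Account("artist-2")
track = {"distortion": 0.95, "seconds": 240}

before = run_filters(noise_artist, track, FILTERS)   # flagged by "non_music"
grant_appeal(noise_artist, "non_music")
after = run_filters(noise_artist, track, FILTERS)    # no longer flagged
unchanged = run_filters(other_account, track, FILTERS)  # other accounts unaffected
```

The exemption is scoped to one filter and one account, so the filter keeps working as before for everyone else.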

The same logic extends to every subsequent filter.

Duplicates. We do not want the same recording to appear twice. Detection runs on audio fingerprinting — not on titles or filenames, which can be changed to game the system. When a duplicate is found, the question immediately becomes: who is the rightful owner? The contributor may have uploaded their own track twice by accident. Or someone else uploaded it first. Both cases require resolution, so we built a dispute flow that routes conflicts to human review with full audit trails.
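The branching described above can be sketched in a few lines. This is an illustration under loud assumptions: `fingerprint` is a stand-in that hashes coarsely quantized samples, which a real acoustic fingerprint would replace, and `Library`, `submit`, and `review_queue` are hypothetical names rather than parts of the actual system.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict, List, Tuple


def fingerprint(samples: List[int]) -> str:
    """Stand-in fingerprint: hash of coarsely quantized samples.

    A real acoustic fingerprint survives re-encoding; this toy does not.
    """
    coarse = bytes((s // 16) % 256 for s in samples)
    return hashlib.sha256(coarse).hexdigest()


@dataclass
class Library:
    owners: Dict[str, str] = field(default_factory=dict)  # fingerprint -> first contributor
    review_queue: List[Tuple[str, str, str]] = field(default_factory=list)

    def submit(self, contributor: str, filename: str, samples: List[int]) -> str:
        fp = fingerprint(samples)  # the filename plays no part in detection
        first_owner = self.owners.get(fp)
        if first_owner is None:
            self.owners[fp] = contributor
            return "accepted"
        if first_owner == contributor:
            return "duplicate_of_own_upload"
        # Conflicting claims go to human review instead of being decided automatically.
        self.review_queue.append((fp, first_owner, contributor))
        return "disputed"


lib = Library()
audio = [0, 120, 250, 120, 0, -120, -250, -120]
results = [
    lib.submit("alice", "song.wav", audio),
    lib.submit("alice", "song_final.wav", audio),   # renamed, same audio
    lib.submit("bob", "my_original.wav", audio),    # same audio, different claimant
]
```

Renaming the file changes nothing, because only the audio content feeds the lookup.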

Covers. Fingerprinting catches near-identical recordings. It does not catch a singer performing someone else's song on an acoustic guitar. For a corpus that promises rights-cleared training data, this is a critical gap. Standard content identification systems, such as YouTube's Content ID, cannot help here, because a cover and the original recording are too different. We partnered with Aurismatic, a company whose technology was originally built to identify songs performed live at concerts, where the audio bears little resemblance to the studio version. The resulting prototype for our cover detection system currently recognizes the thirty thousand most-covered songs. It is not comprehensive. But it is a meaningful first barrier.

AI-generated music. Detecting synthetic audio is not unsolvable; it is a cat-and-mouse game. Each generation of models can be learned and identified; then a new generation appears, and the detector must catch up. Building a system that stays current permanently is a separate company's problem, and not one we wanted to take on. To our surprise, a relatively modest effort produced a detector that reliably identifies tracks generated by Suno, up to and including its second-most-recent model version. It is not future-proof. It is not meant to be. It is a checkpoint: one layer in a system where no single filter carries the full burden.

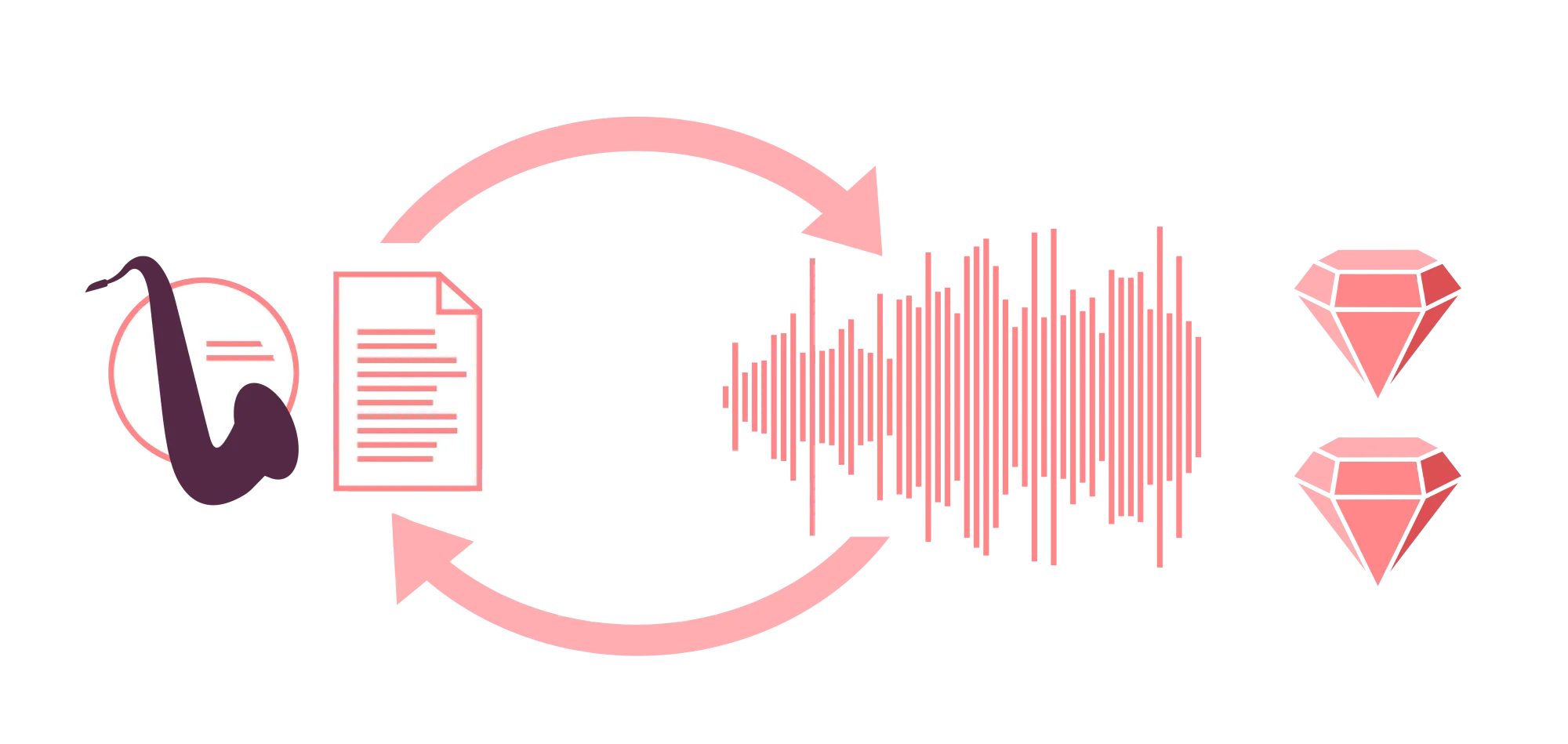

Stem verification. Contributors earn additional points for uploading stems alongside their master recordings, because separated tracks can be more valuable for training. This creates an incentive to upload unrelated audio files as "stems." So we verify that uploaded stems correspond to the master — not by filename, but by waveform analysis. If they don't match, the upload is flagged.
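One simple way to picture such a check is to sum the stems and compare the result with the master. The article does not say which waveform analysis CORPUS uses, so the sketch below is an assumption throughout: it uses plain cosine similarity, assumes the stems are time-aligned and of equal length, and ignores mastering effects such as compression that a production system would have to tolerate. The function names and the 0.9 threshold are hypothetical.

```python
import math
from typing import List, Sequence


def mix(stems: Sequence[Sequence[float]]) -> List[float]:
    """Sum the stems sample by sample (assumes equal length and alignment)."""
    return [sum(frame) for frame in zip(*stems)]


def similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two waveforms, in the range [-1, 1]."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def stems_match_master(
    master: Sequence[float],
    stems: Sequence[Sequence[float]],
    threshold: float = 0.9,  # hypothetical cut-off, chosen for illustration
) -> bool:
    """True when the summed stems resemble the master closely enough."""
    return similarity(master, mix(stems)) >= threshold


def tone(freq: float, n: int = 2000, rate: int = 8000) -> List[float]:
    """Generate a sine tone to stand in for a recorded stem."""
    return [math.sin(2 * math.pi * freq * i / rate) for i in range(n)]


bass, lead, unrelated = tone(110), tone(440), tone(523)
master = [0.8 * (b + l) for b, l in zip(bass, lead)]  # master = scaled mix of bass and lead

genuine = stems_match_master(master, [bass, lead])       # stems belong to the master
padded = stems_match_master(master, [bass, unrelated])   # one stem swapped for unrelated audio
```

Because cosine similarity ignores overall gain, a master that is simply louder or quieter than the summed stems still matches, while a swapped-in stem pulls the score down.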

Vocal detection and personality rights. If a track contains singing, a further legal dimension opens. Vocals carry personality rights that go beyond copyright. Our system detects whether a track contains vocals. If it does, the contributor's collaboration agreement must include a singer who has explicitly consented to the use. Without that consent, the track does not enter the library.
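Expressed as a gate, the rule might look like the sketch below. The `Party` type, the role label `"singer"`, and the function name are hypothetical. The sketch also makes one assumption the text leaves open: when several singers are listed, all of them must have consented.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Party:
    name: str
    role: str        # e.g. "singer" or "producer" (hypothetical role labels)
    consented: bool  # explicit consent to use as AI training material


def may_enter_library(has_vocals: bool, parties: Tuple[Party, ...]) -> bool:
    """Apply the vocal-consent rule to one track.

    Assumption: every listed singer must consent, and a vocal track
    with no singer on the agreement is rejected.
    """
    if not has_vocals:
        return True  # this rule only concerns tracks with detected vocals
    singers = [p for p in parties if p.role == "singer"]
    return bool(singers) and all(s.consented for s in singers)


producer = Party("P. Example", "producer", True)
singer_yes = Party("S. Example", "singer", True)
singer_no = Party("T. Example", "singer", False)

instrumental_ok = may_enter_library(False, (producer,))
vocal_without_singer = may_enter_library(True, (producer,))
vocal_with_consent = may_enter_library(True, (producer, singer_yes))
vocal_missing_consent = may_enter_library(True, (producer, singer_yes, singer_no))
```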

What none of this required

A recurring realization during development: none of these filters is exotic technology. Non-music detection, fingerprint-based deduplication, cover recognition, AI detection, stem verification: each is buildable with current tools at reasonable cost. The engineering was careful, not heroic.

This makes the absence of such systems elsewhere harder to explain. Major upload platforms process millions of tracks with only fragmentary checks on whether the audio is music, whether it duplicates existing content, whether it is a cover, or whether it was generated by an algorithm. The result is a music ecosystem flooded with redundant, synthetic, and rights-uncertain material, problems that are then treated as inevitable consequences of scale.

They are not inevitable. They are consequences of choosing not to filter. We chose differently, and the cost was manageable. The filters we built would function on any upload platform. That they don't exist on most is an infrastructure decision, not a technical limitation.

Splitting compensation before the upload, not after

When multiple people create a track together, compensation must be split. In CORPUS, this happens before the upload — not retroactively.

A contributor creates an agreement that defines a simple percentage split among all parties involved. Each party is invited by email to confirm their share and to consent explicitly to the use of the work as AI training material. The agreement is captured as an immutable snapshot at the moment of upload. If the agreement template it was created from is later changed or deleted, past uploads are unaffected.
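The snapshot behavior can be sketched as a read-only copy taken at upload time. Everything named here (`AgreementSnapshot`, `snapshot`, `split`, the example addresses) is hypothetical, and two details are assumptions rather than documented behavior: shares are whole percentages that must sum to 100, and payouts are rounded down to whole cents.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import Dict, Mapping, Set


@dataclass(frozen=True)
class AgreementSnapshot:
    shares: Mapping[str, int]  # party email -> percentage share


def snapshot(template: Dict[str, int], confirmed: Set[str]) -> AgreementSnapshot:
    """Freeze an agreement at upload time, once every party has confirmed."""
    if sum(template.values()) != 100:
        raise ValueError("shares must sum to 100 percent")
    missing = set(template) - confirmed
    if missing:
        raise ValueError(f"unconfirmed parties: {sorted(missing)}")
    # Copy first, then wrap read-only, so later template edits cannot leak in.
    return AgreementSnapshot(MappingProxyType(dict(template)))


def split(amount_cents: int, snap: AgreementSnapshot) -> Dict[str, int]:
    """Divide a payout by the frozen shares (rounds down to whole cents)."""
    return {party: amount_cents * pct // 100 for party, pct in snap.shares.items()}


template = {"a@example.com": 60, "b@example.com": 40}
snap = snapshot(template, confirmed={"a@example.com", "b@example.com"})

template["a@example.com"] = 10          # template edited after the upload
payout = split(1000, snap)              # still uses the 60/40 split
```

Rounding down can leave a few cents unallocated on amounts that do not divide evenly; how to handle that remainder is a policy choice this sketch leaves open.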

This is where the system's ambitions collide with usability. Every consent step adds friction. And friction, past a certain threshold, kills adoption — no matter how justified the underlying principle. This is not only our problem. It is the central political challenge of the entire music-AI licensing landscape: collecting societies, regulators, and industry bodies are all designing complex permission architectures. But a system that is correct and unused is worse than a system that is imperfect and adopted. The speed of technological development does not wait for consensus to form or for legislation to pass.

We tried to make the process as frictionless as possible — automated onboarding, single-click consent, reusable templates. Whether it is simple enough to be accepted by working musicians is the open question of the beta.

One point, however, was non-negotiable. If a track contains vocals, the singer must be named in the agreement and must have explicitly consented. Vocals carry personality rights — a dimension so intimate that no efficiency argument can override it. This is the line we drew: the system can tolerate imperfection elsewhere, but not here.

The semantic layer: a musicological problem before a technical one

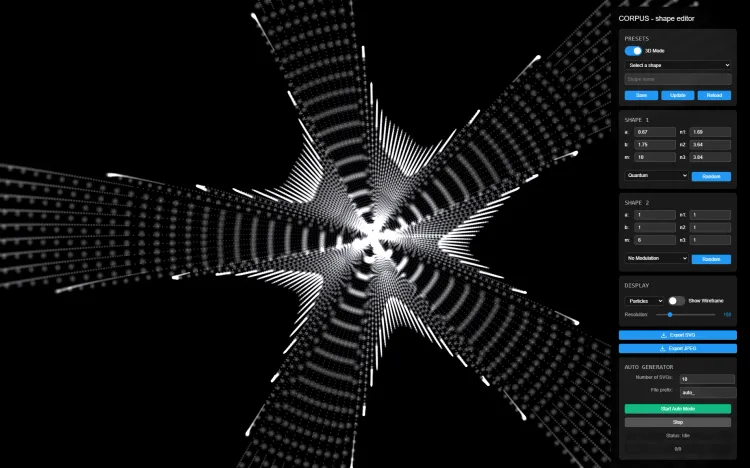

Beyond filtering and rights, every contribution passes through an annotation pipeline that generates detailed descriptions of the music — not just tags (genre, mood, tempo) but structured narratives covering what the music sounds like, what it evokes, where it belongs, and how it might function in context.

This pipeline runs entirely on self-hosted infrastructure. The music never leaves our servers. The underlying models are open-weight and free for commercial use, hosted in Germany. What is proprietary is the architecture around them — the way these models are composed, sequenced, and prompted to produce descriptions of a depth and consistency that the models alone do not deliver.

We did not expect this to work.

A year ago, the prevailing assumption — shared by many researchers and engineers we spoke with — was that generating meaningful textual descriptions of music was not feasible. Music is too subjective, too context-dependent, too resistant to verbal formalization.

That assumption turned out to be half right. The problem was not that language cannot describe music. The problem was that no one had clearly defined what a useful description of music should contain. The question had been treated as a technical challenge — how to extract features from audio — when it was first a musicological one: what are the dimensions of musical meaning that a description should capture?

We assembled a small team of musicologists and composers to answer that question. Not to build software, but to formalize the act of description itself. What would you tell twenty trained annotators to do, consistently, across thousands of tracks? What categories hold? What collapses? Where does subjective interpretation begin, and where does it end?

The formalization that emerged from this work became the specification for automation. When the underlying language models proved capable enough to follow that specification reliably, the pipeline became possible. The quality of the output is a consequence of the quality of the question — not of the scale of the model.

Controlled growth, not platform logic

CORPUS is not trying to maximize uploads. We are trying to build a library whose integrity we can guarantee. This means knowing our contributors.

The platform distinguishes between visitors and contributors. Both can upload and explore the annotation pipeline. The difference is that only a contributor's uploads enter the CORPUS library. The transition requires a lightweight vetting process: does this person have a credible production history? Do they make music that predates the generative AI wave? What kind of work is it?

This is not scalable in the way platform economics demands. It is deliberately slow. The alternative — open registration and retroactive moderation — is precisely the model that has produced the content crisis now visible on every major music platform.

We will need to revisit this as the corpus grows. Some of the vetting may become automatable. But the principle is fixed: the quality of the dataset is bounded by the quality of the contributor base, and quality requires knowledge, not just volume.

What the app reveals

We asked the questions that upload infrastructure usually leaves unanswered: what counts as music, who owns it, whether it is original, whether it is human, whether its creators have consented. Most of these systems work well — in some cases, better than we expected. But gaps remain. Production quality assessment — the ability to reward not just what a track is, but how well it is made — is the most significant. We plan to close it in the coming months.

Upload platforms are not passive infrastructure. They are decision architectures. Every file that enters a system either passes through a series of explicit judgments — is this music, is it original, is it human, have its creators consented — or it passes through the absence of those judgments. Both are decisions. The music industry has, for the most part, chosen the second. The consequences are now visible everywhere: flooded catalogs, uncertain rights, eroded trust, and a growing dataset crisis that AI has made urgent but did not create.

CORPUS chose differently. Not because the technology demanded it, but because the promise we want to make — to contributors, to licensees, to the models trained on this music — demanded it. The contribution app is where that promise becomes operational.

The public beta begins in March 2026. Musicians can join the waitlist.